Chenyu (Monica) Wang

Massachusetts Institute of Technology

wangchy@mit.edu

About Me

I am a fourth-year PhD candidate at MIT CSAIL, advised by Prof. Tommi Jaakkola. My research interests lie broadly in deep generative models, reinforcement learning, multi-modal learning, and AI for science. During my PhD, I was a research intern at Meta FAIR and Genentech. My research has been supported by the Citadel GQS PhD Fellowship.

Before my PhD, I obtained my Bachelor’s degree from Tsinghua University, where I worked as a research assistant in the Tsinghua Universal Machine Learning (THUML) Group under the supervision of Mingsheng Long. I was also fortunate to work as a research intern with Mengdi Wang at Princeton University and with Cyrus Shahabi at the University of Southern California.

Google Scholar / LinkedIn / Twitter

Resume (Updated in Oct 2025)

Research

My research focuses on developing controllable and efficient generative models, via reinforcement learning, multi-modal learning, and representation learning. I work across various application domains, including language models, vision, and scientific data (e.g. biochemistry). My recent work explores:

- Reinforcement learning, reasoning, and inference-time alignment for deep generative models and language models. [8, 10, 13, 14, 18, 21]

- Multi-modal learning, especially multi-modal representations and their interactions with generative models. [4, 6, 7, 9, 15, 16, 19, 20]

- Enhancing biochemistry discovery through generative models and agents. [3, 5, 11, 12, 17]

Publications & Preprints

The full publication list can be found on Google Scholar.

(* Equal Contribution)

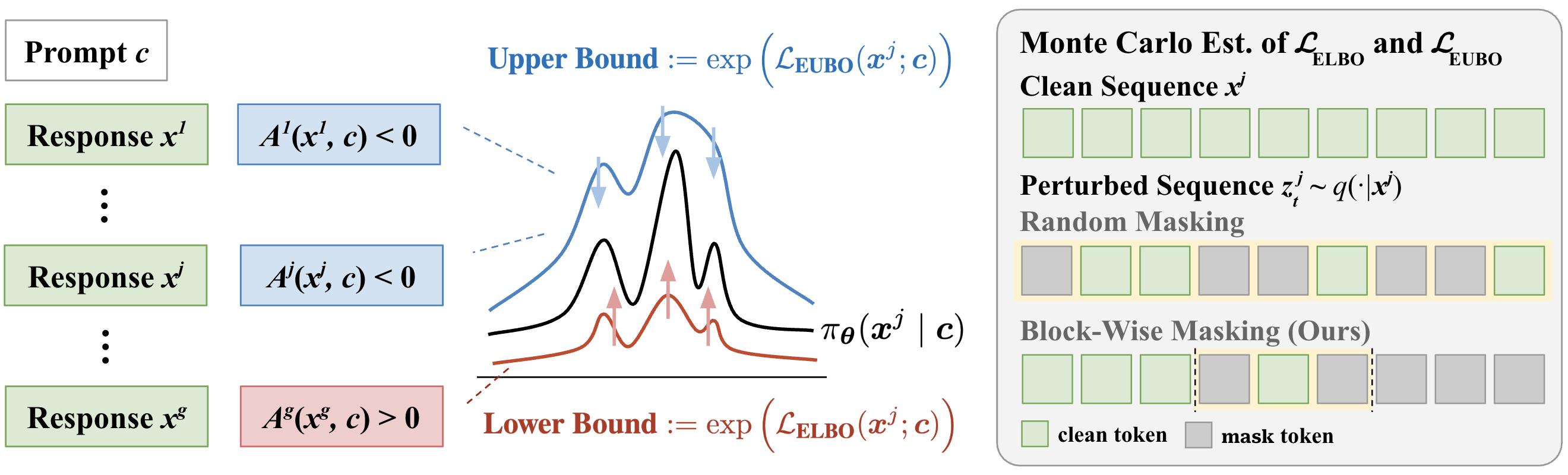

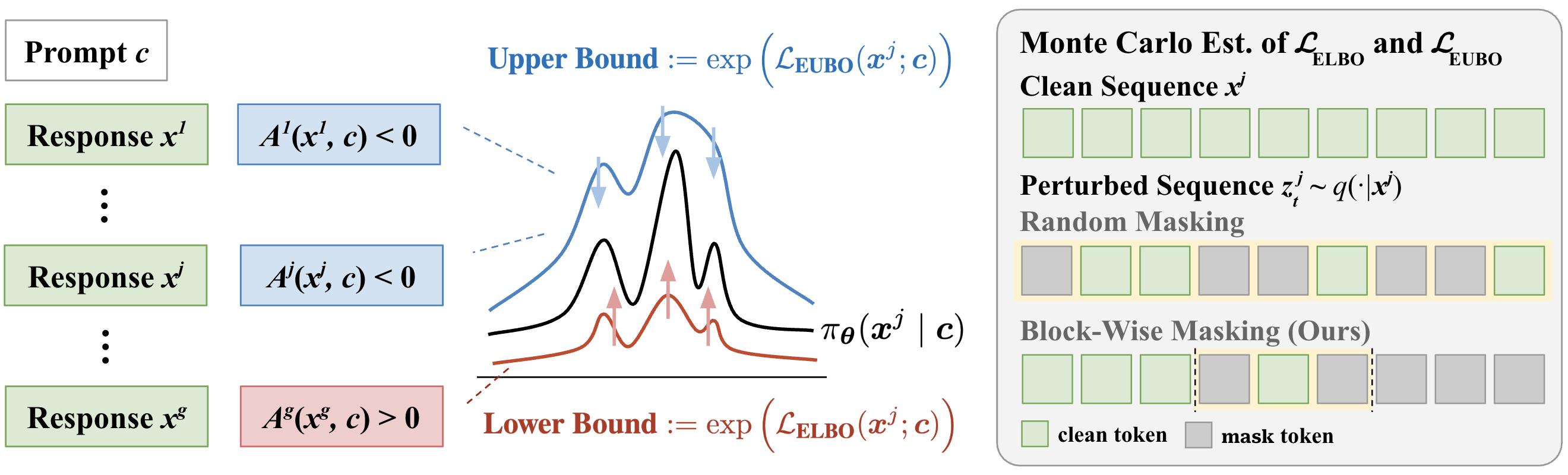

21. SPG: Sandwiched Policy Gradient for Masked Diffusion Language Models. Chenyu Wang, Paria Rashidinejad, DiJia Su, Song Jiang, Sid Wang, Siyan Zhao, Cai Zhou, Shannon Zejiang Shen, Feiyu Chen, Tommi Jaakkola, Yuandong Tian, Bo Liu. International Conference on Learning Representations. ICLR 2026. [Paper] [Code] [Project Webpage]

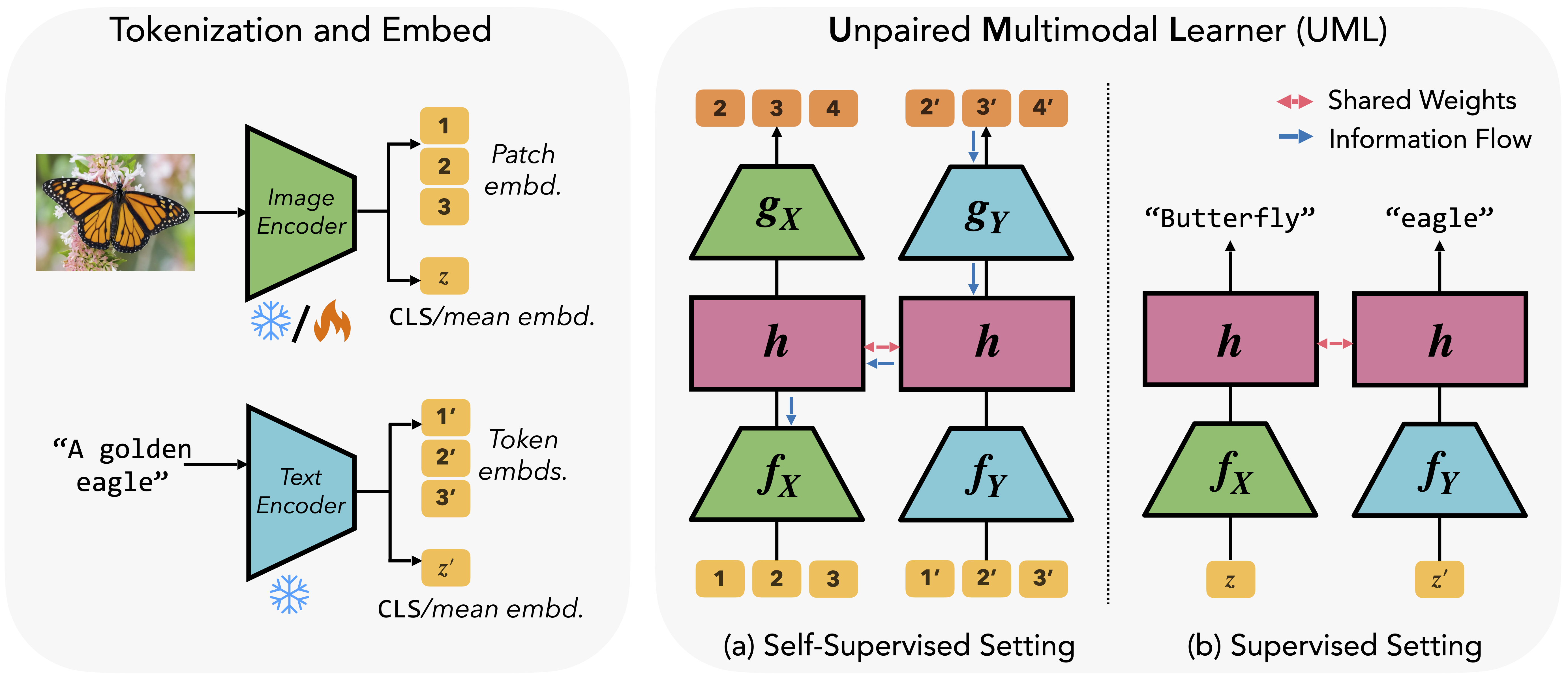

20. Better Together: Leveraging Unpaired Multimodal Data for Stronger Unimodal Models. Sharut Gupta, Shobhita Sundaram, Chenyu Wang, Stefanie Jegelka, Phillip Isola. International Conference on Learning Representations. ICLR 2026. [Paper] [Code] [Project Webpage]

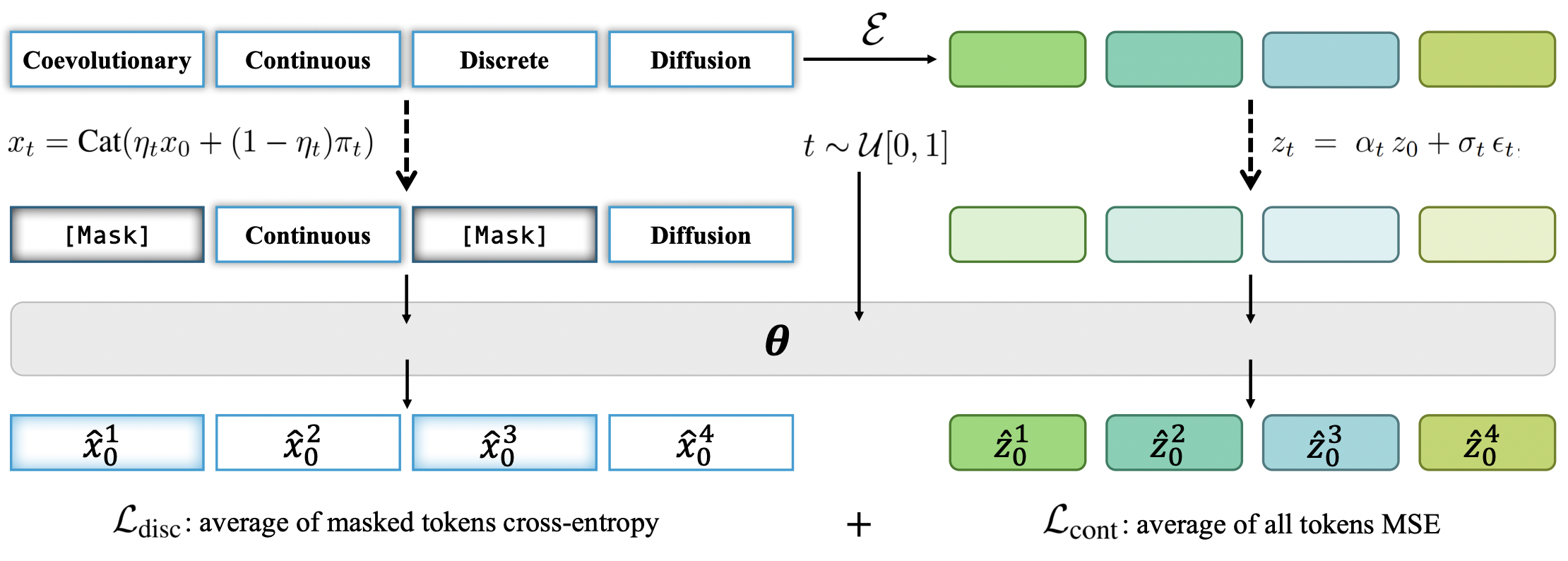

19. Coevolutionary Continuous Discrete Diffusion: Make Your Diffusion Language Model a Latent Reasoner. Cai Zhou, Chenxiao Yang, Yi Hu, Chenyu Wang, Chubin Zhang, Muhan Zhang, Lester Mackey, Tommi Jaakkola, Stephen Bates, Dinghuai Zhang. Preprint. [Paper]

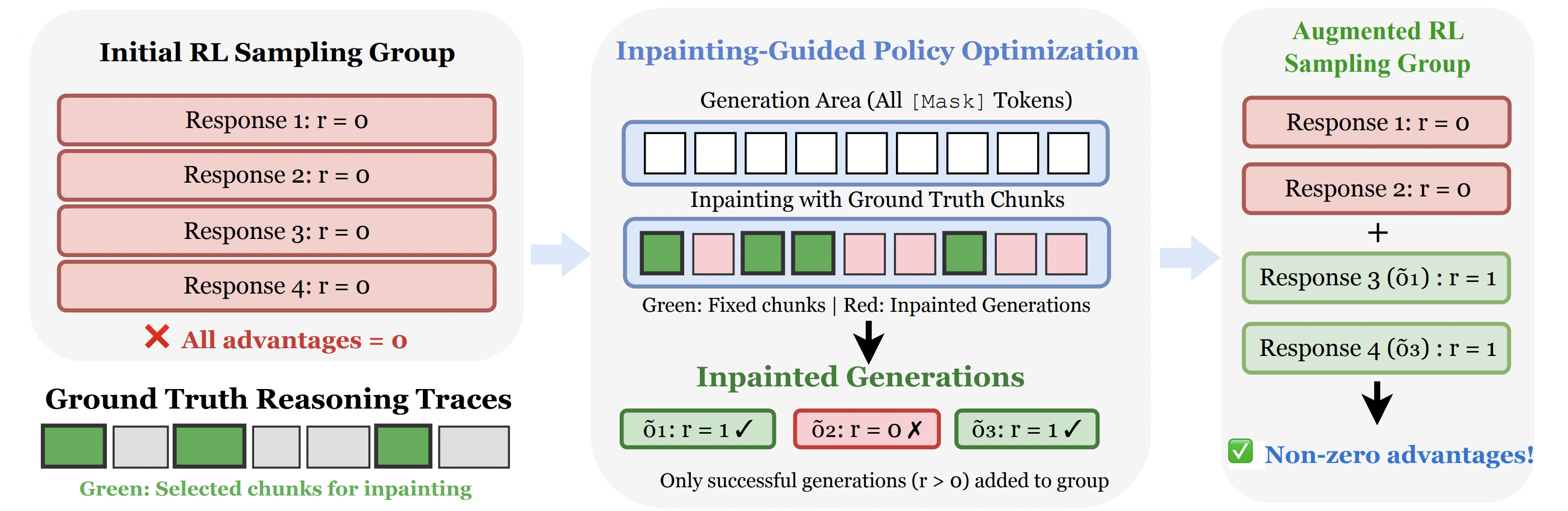

18. Inpainting-Guided Policy Optimization for Diffusion Large Language Models. Siyan Zhao, Mengchen Liu, Jing Huang, Miao Liu, Chenyu Wang, Bo Liu, Yuandong Tian, Guan Pang, Sean Bell, Aditya Grover, Feiyu Chen. International Conference on Learning Representations. ICLR 2026. [Paper]

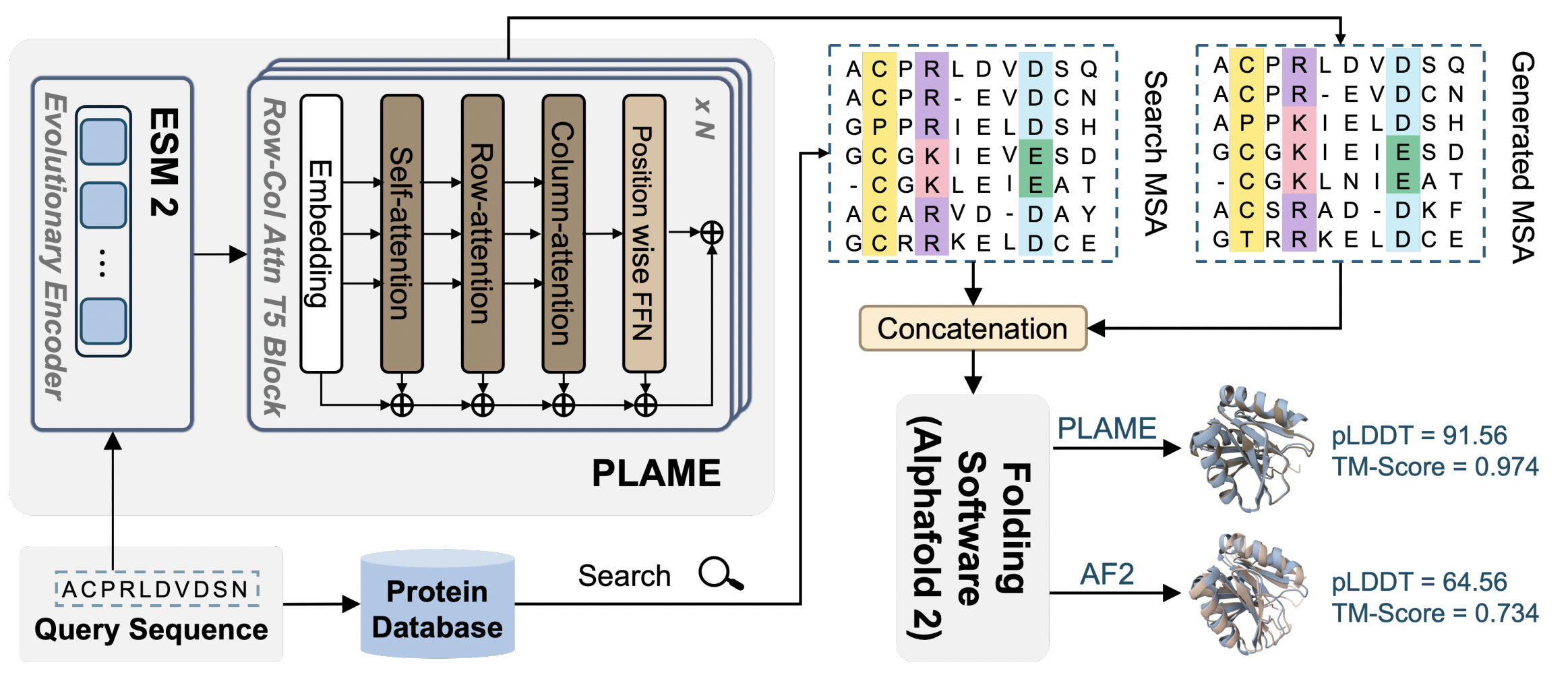

17. Lightweight MSA Design Advances Protein Folding From Evolutionary Embeddings. Hanqun Cao*, Xinyi Zhou*, Zijun Gao*, Chenyu Wang, Xin Gao, Zhi Zhang, Chunbin Gu, Ge Liu, Pheng-Ann Heng. Preprint. [Paper]

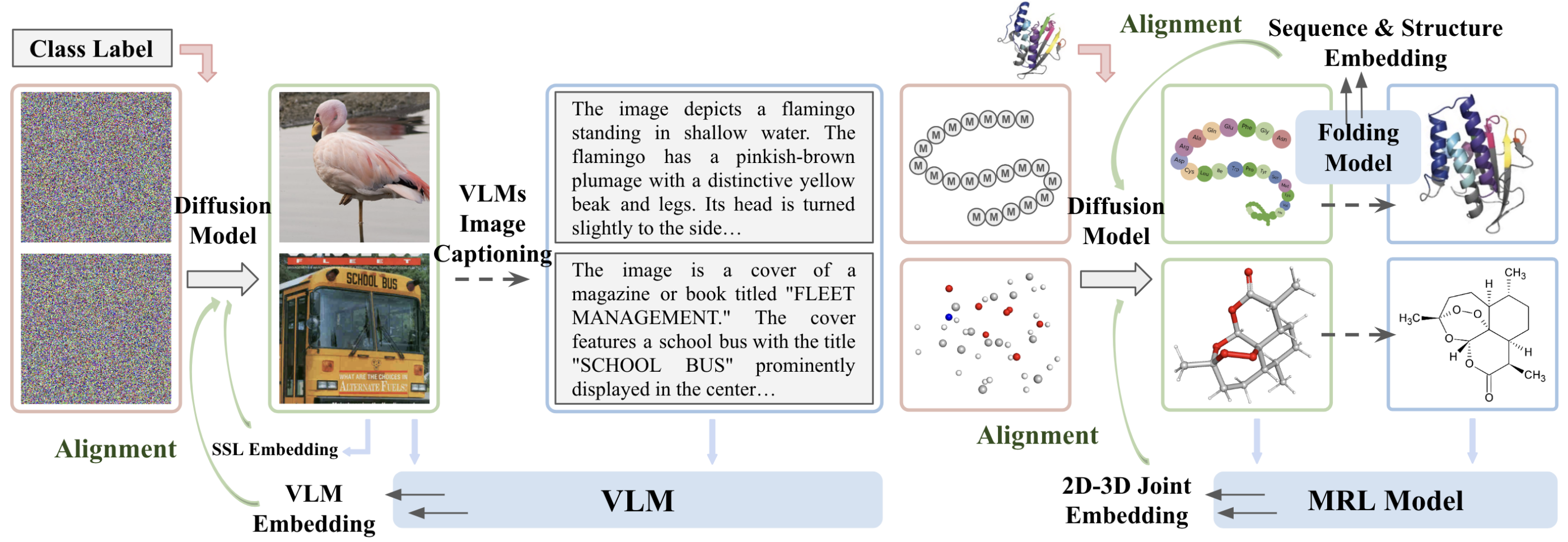

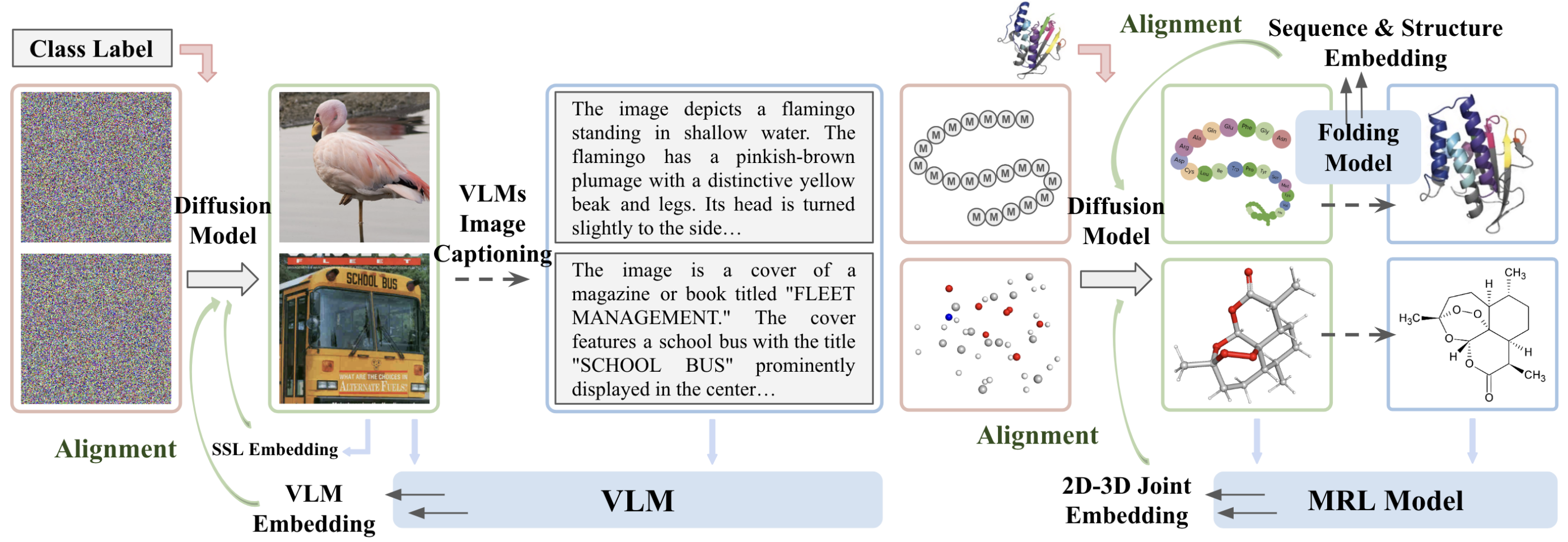

16. Learning Diffusion Models with Flexible Representation Guidance. Chenyu Wang*, Cai Zhou*, Sharut Gupta, Zongyu Lin, Stefanie Jegelka, Stephen Bates, Tommi Jaakkola. Advances in Neural Information Processing Systems. NeurIPS 2025. Also Oral at ICML 2025 FM4LS workshop. [Paper] [Code] [Project Webpage]

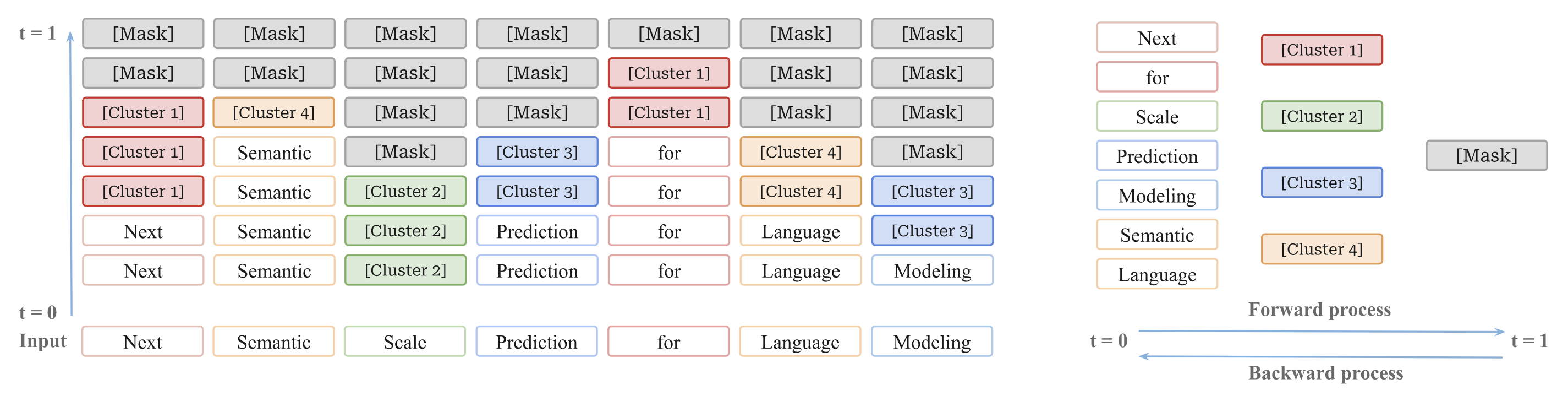

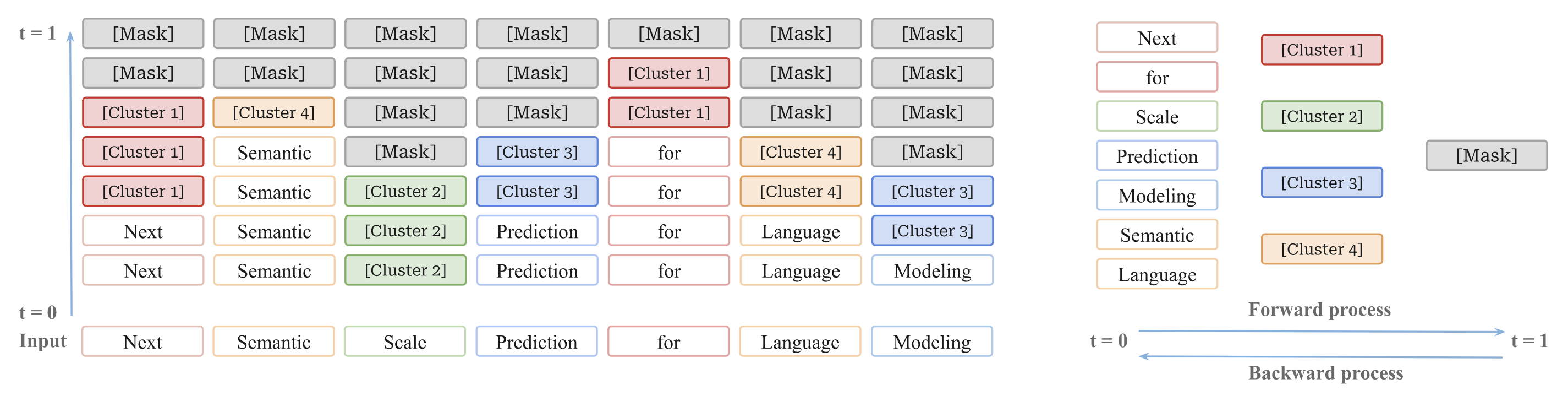

15. Next Semantic Scale Prediction via Hierarchical Diffusion Language Models. Cai Zhou*, Chenyu Wang*, Dinghuai Zhang*, Shangyuan Tong, Yifei Wang, Stephen Bates, Tommi Jaakkola. Advances in Neural Information Processing Systems. NeurIPS 2025. [Paper] [Code]

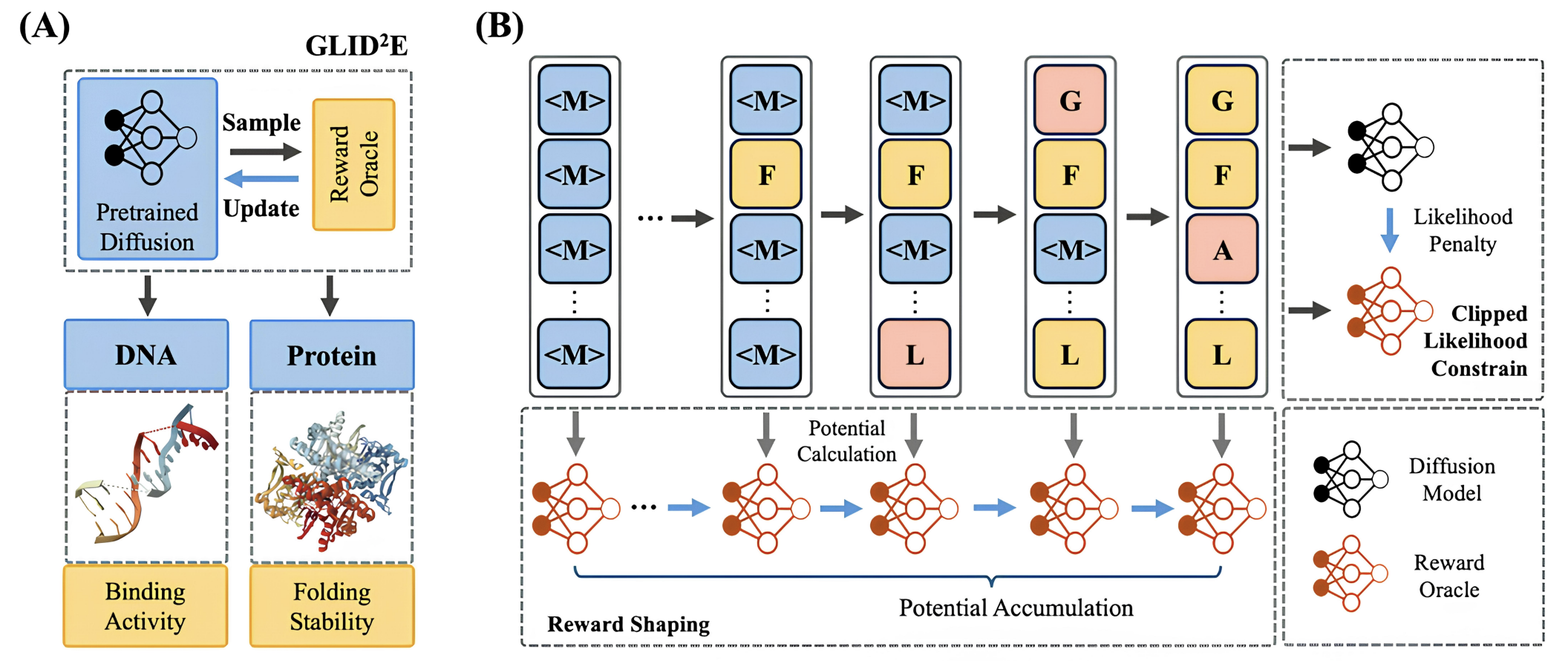

14. GLID$^2$E: A Gradient-Free Lightweight Fine-tune Approach for Discrete Sequence Design. Hanqun Cao*, Haosen Shi*, Chenyu Wang, Sinno Jialin Pan, Pheng-Ann Heng. Advances in Neural Information Processing Systems. NeurIPS 2025. [Paper]

13. Derivative-Free Guidance in Continuous and Discrete Diffusion Models with Soft Value-Based Decoding. Xiner Li, Yulai Zhao, Chenyu Wang, Gabriele Scalia, Gokcen Eraslan, Surag Nair, Tommaso Biancalani, Aviv Regev, Sergey Levine, Masatoshi Uehara. Advances in Neural Information Processing Systems. NeurIPS 2025. [Paper] [Code]

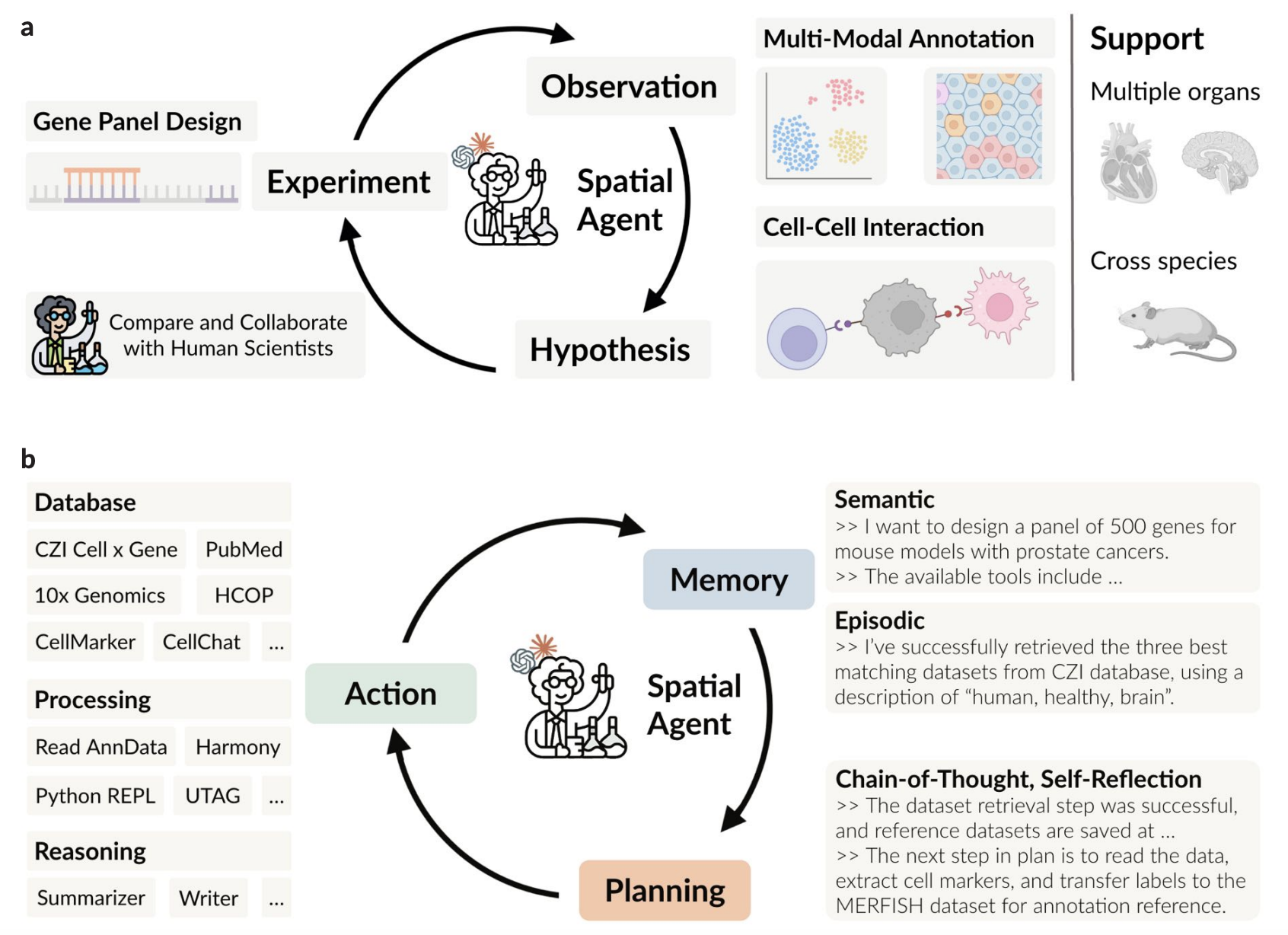

12. SpatialAgent: An Autonomous AI Agent for Spatial Biology. Hanchen Wang*#, Yichun He*, Paula P Coelho*, Matthew Bucci*, ..., Chenyu Wang, ..., Aviv Regev#. Preprint. [Paper] [Code]

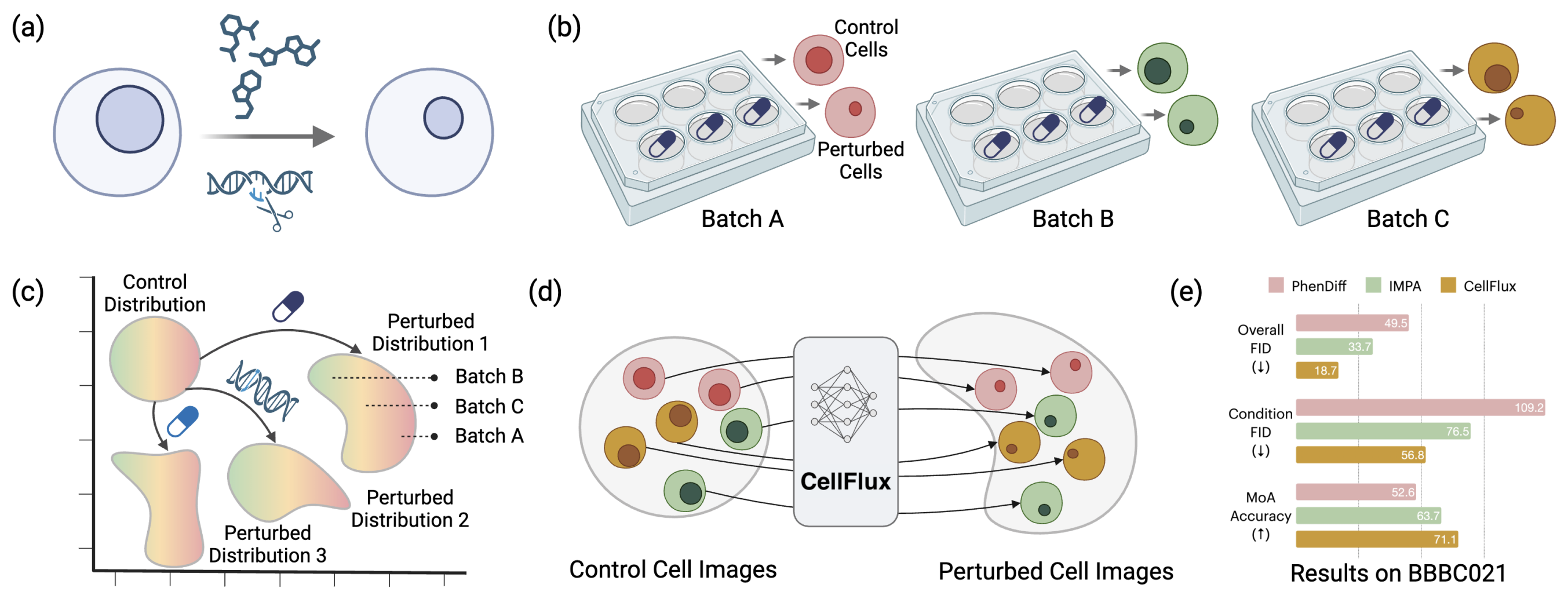

11. CellFlux: Simulating Cellular Morphology Changes via Flow Matching. Yuhui Zhang*, Yuchang Su*, Chenyu Wang, Tianhong Li, Zoe Wefers, Jeffrey Nirschl, James Burgess, Daisy Ding, Alejandro Lozano, Emma Lundberg, Serena Yeung-Levy. International Conference on Machine Learning. ICML 2025. [Paper] [Project Webpage]

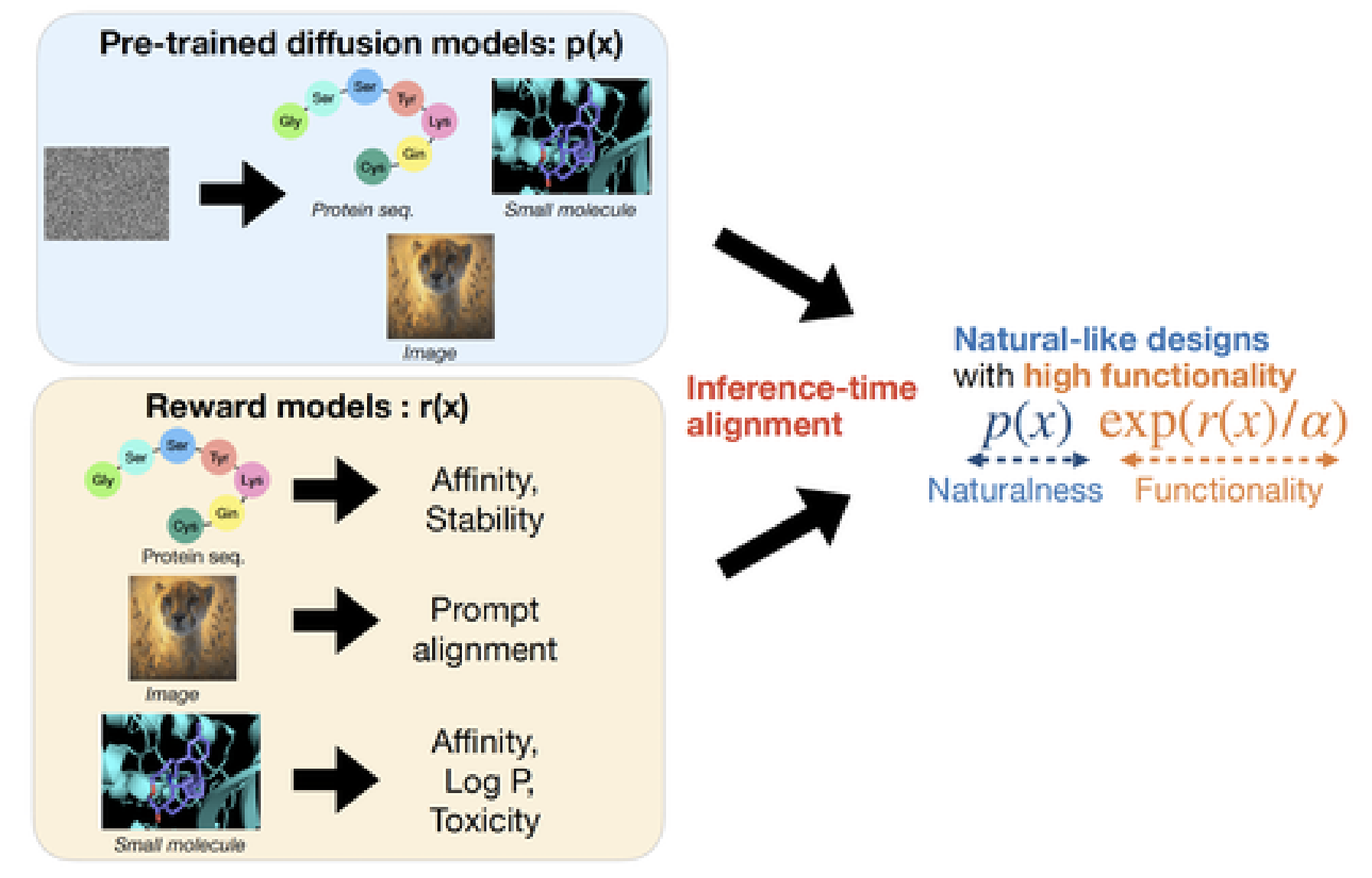

10. Inference-time Alignment in Diffusion Models with Reward-Guided Generation: Tutorial and Review. Masatoshi Uehara, Yulai Zhao, Chenyu Wang, Xiner Li, Aviv Regev, Sergey Levine, Tommaso Biancalani. Preprint. [Paper] [Code]

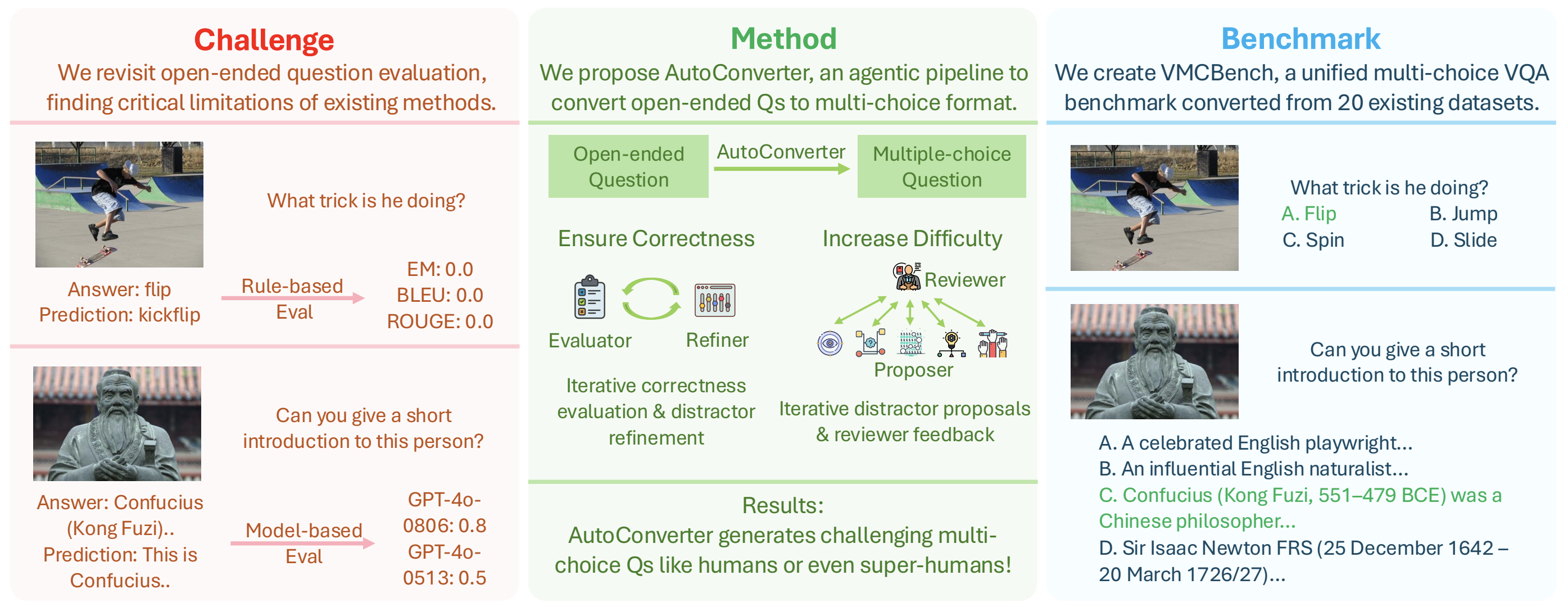

9. Automated Generation of Challenging Multiple-Choice Questions for Vision Language Model Evaluation. Yuhui Zhang*, Yuchang Su*, Yiming Liu, Xiaohan Wang, James Burgess, Elaine Sui, Chenyu Wang, Josiah Aklilu, Alejandro Lozano, Anjiang Wei, Ludwig Schmidt, Serena Yeung-Levy. IEEE Conference on Computer Vision and Pattern Recognition. CVPR 2025. [Paper] [Project Webpage] [Code]

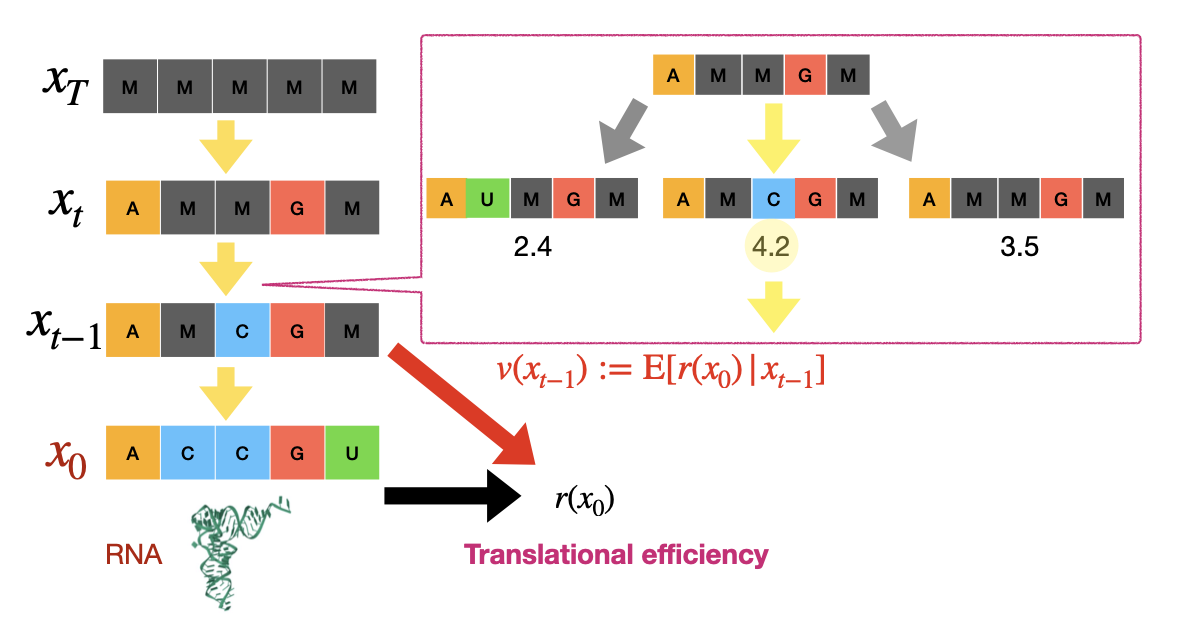

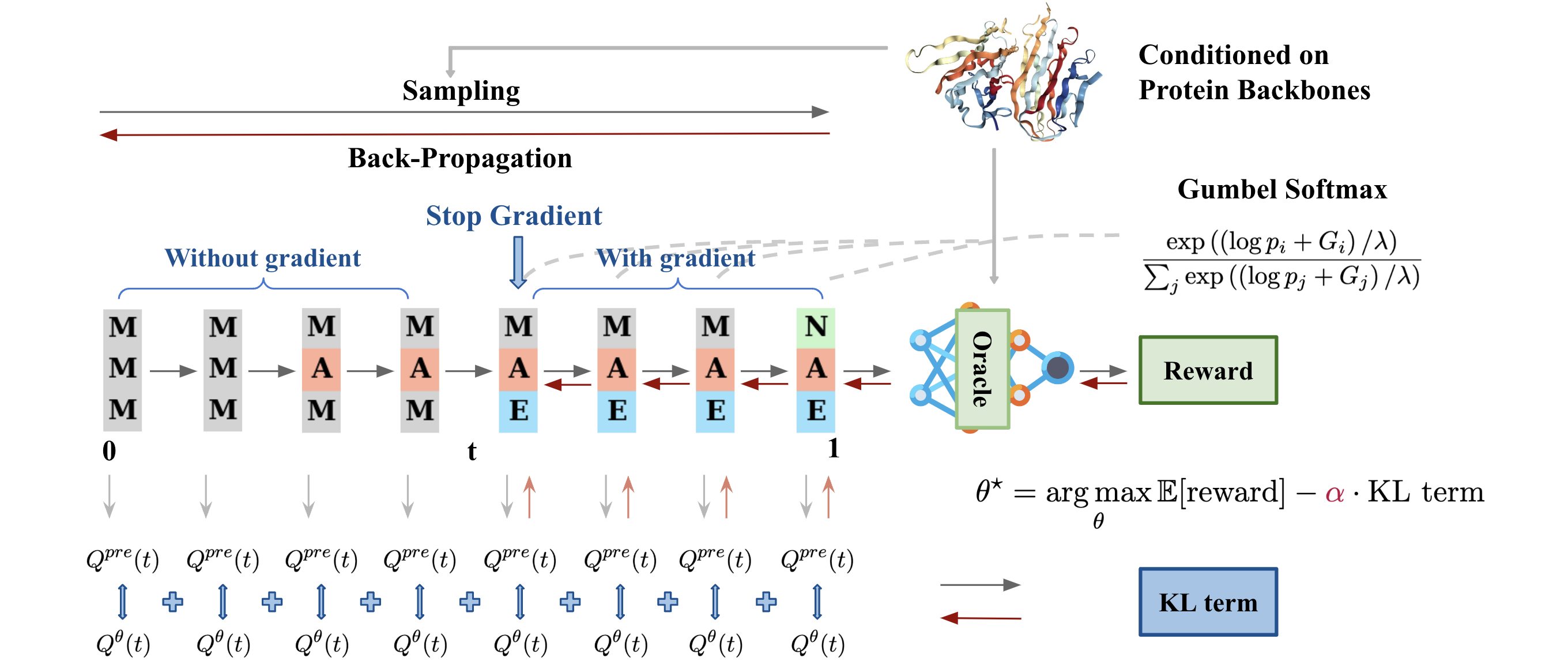

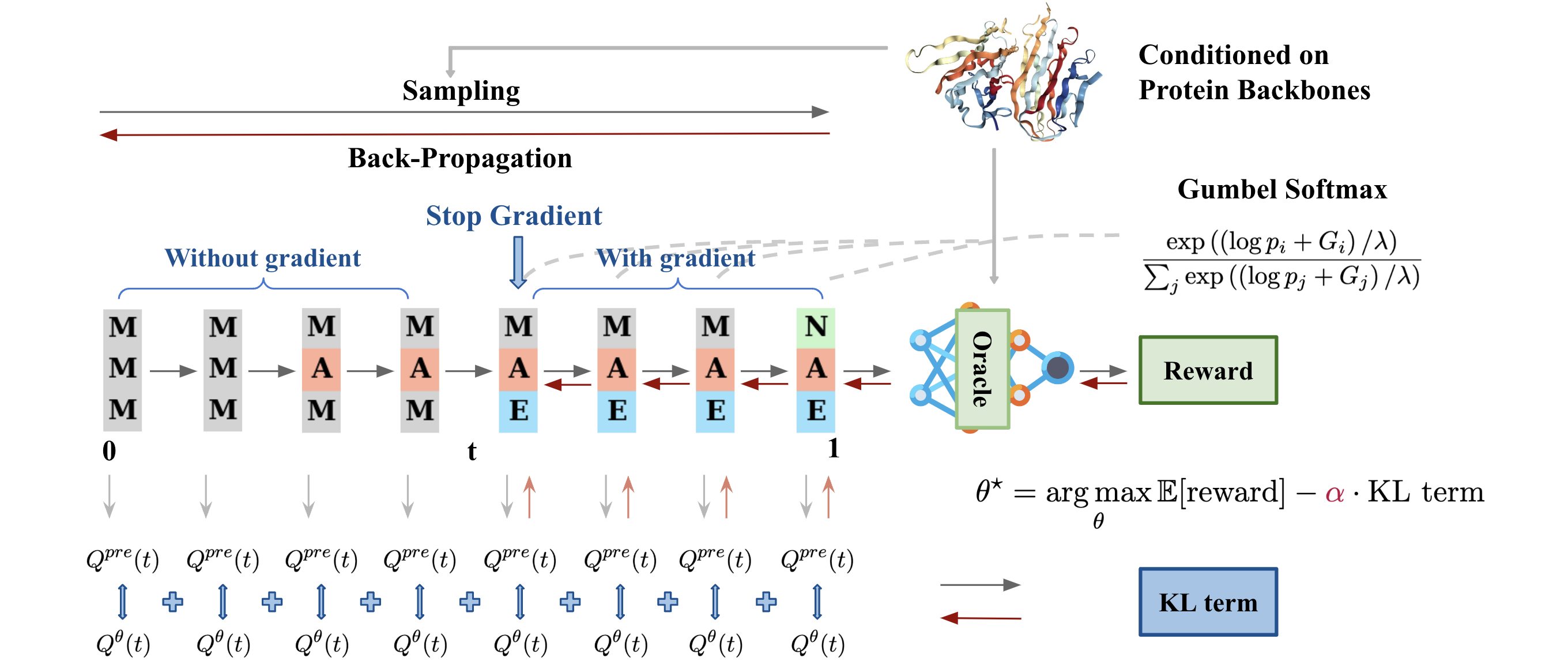

8. Fine-Tuning Discrete Diffusion Models via Reward Optimization with Applications to DNA and Protein Design. Chenyu Wang*, Masatoshi Uehara*, Yichun He, Amy Wang, Tommaso Biancalani, Avantika Lal, Tommi Jaakkola, Sergey Levine, Hanchen Wang, Aviv Regev. International Conference on Learning Representations. ICLR 2025. [Paper] [Code]

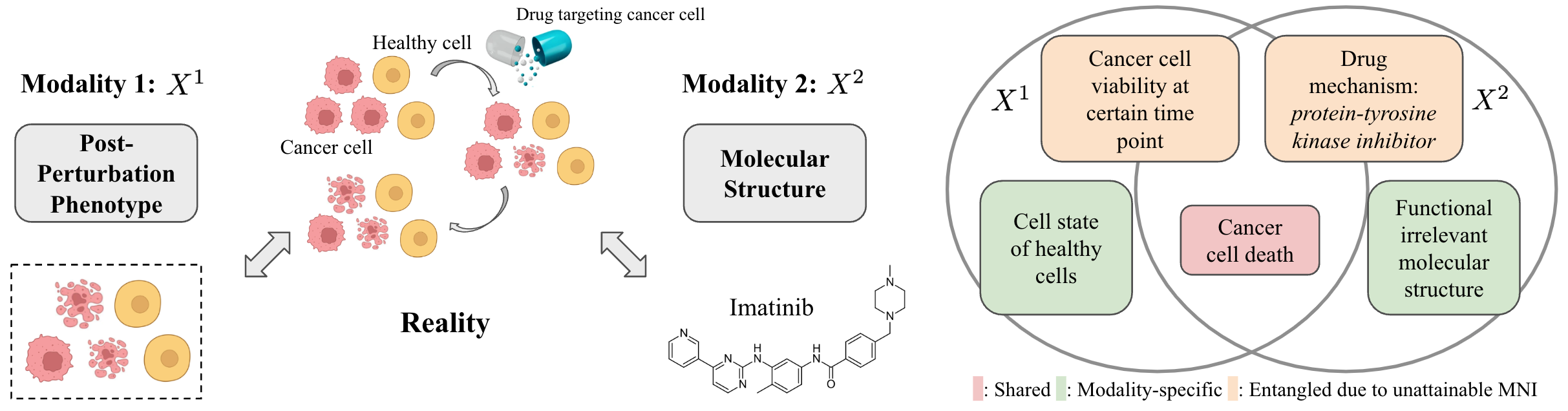

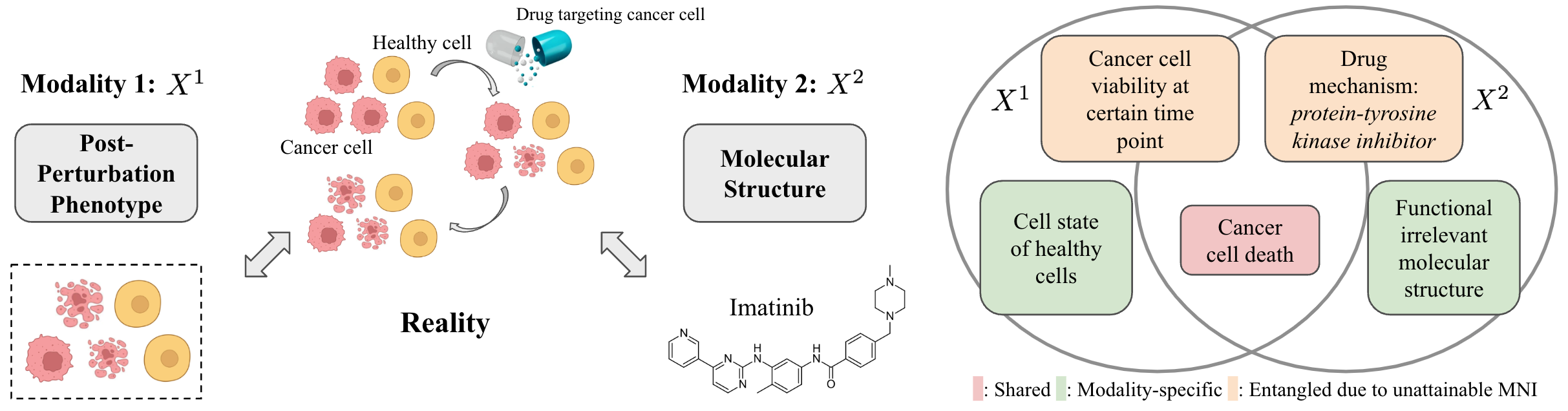

7. An Information Criterion for Controlled Disentanglement of Multimodal Data. Chenyu Wang*, Sharut Gupta*, Xinyi Zhang, Sana Tonekaboni, Stefanie Jegelka, Tommi Jaakkola, Caroline Uhler. International Conference on Learning Representations. ICLR 2025. Also Oral and Honorable Mention Award at NeurIPS 2024 UniReps workshop. [Paper] [Code]

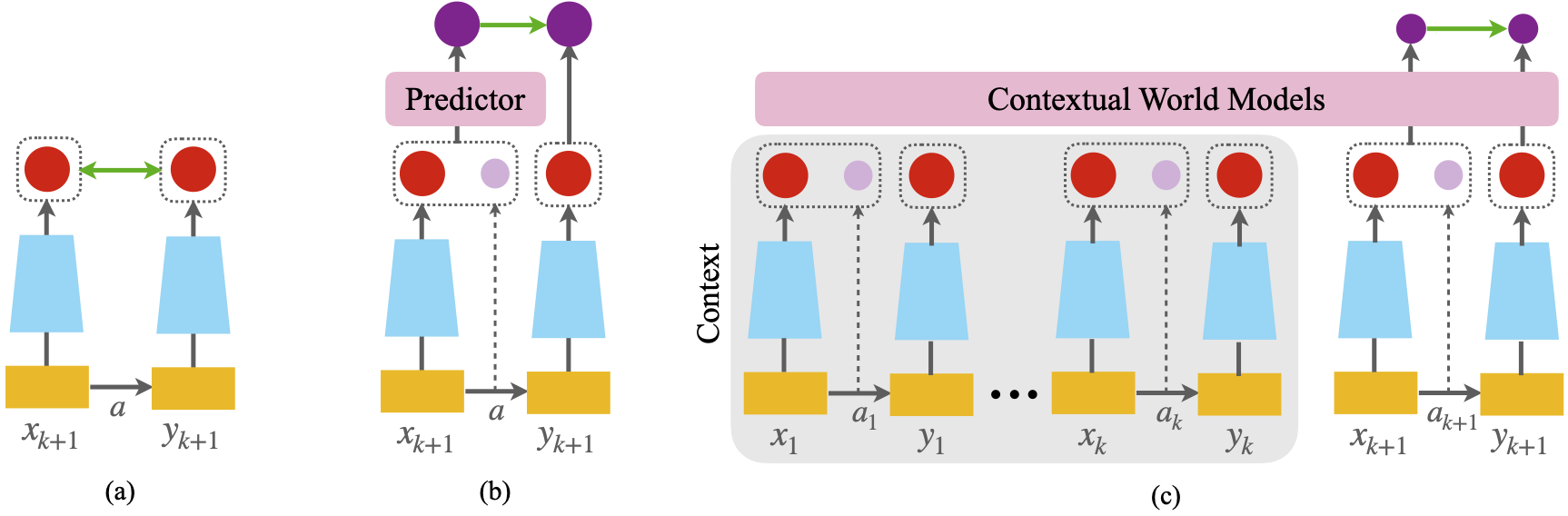

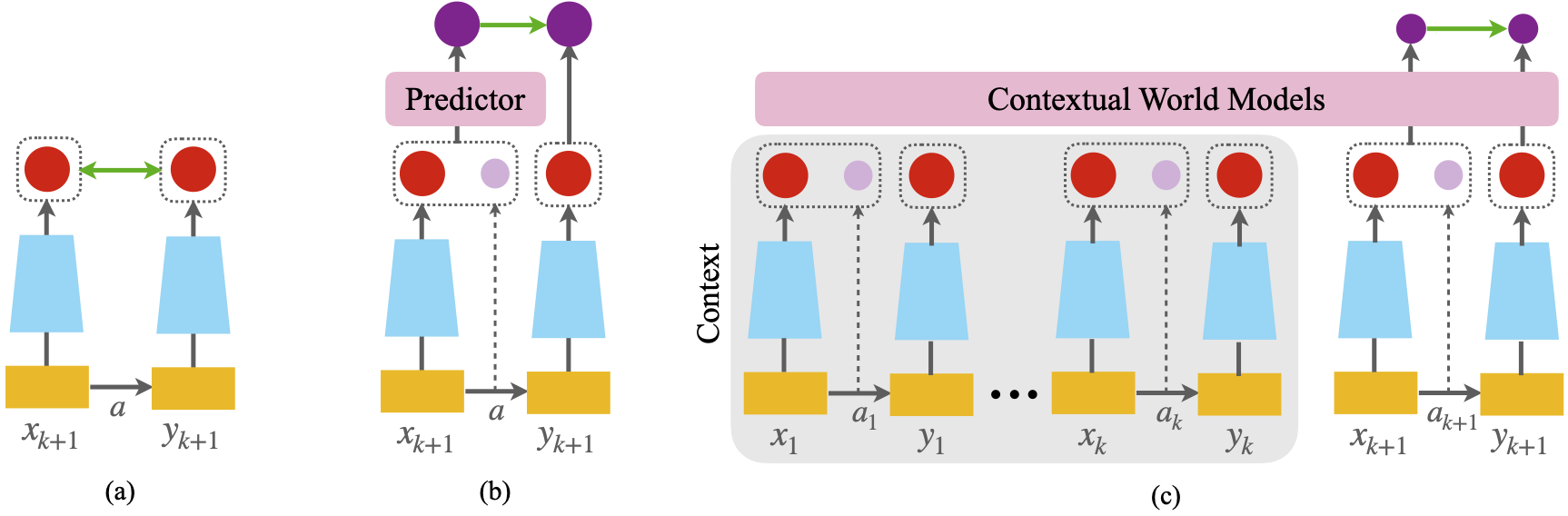

6. In-Context Symmetries: Self-Supervised Learning through Contextual World Models. Sharut Gupta*, Chenyu Wang*, Yifei Wang*, Tommi Jaakkola, Stefanie Jegelka. Advances in Neural Information Processing Systems. NeurIPS 2024. Also Oral at NeurIPS 2024 SSL workshop. [Paper] [Code] [MIT CSAIL News]

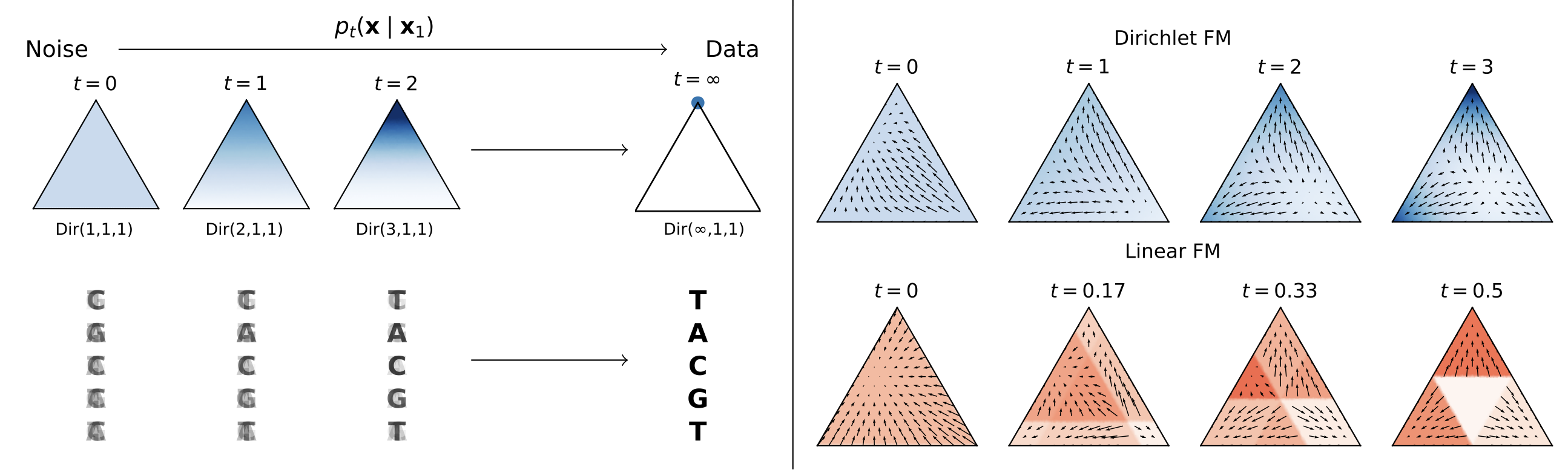

5. Dirichlet Flow Matching with Applications to DNA Sequence Design. Hannes Stark*, Bowen Jing*, Chenyu Wang, Gabriele Corso, Bonnie Berger, Regina Barzilay, Tommi Jaakkola. International Conference on Machine Learning. ICML 2024. Also Oral at ICLR 2024 MLGenX workshop. [Paper] [Code]

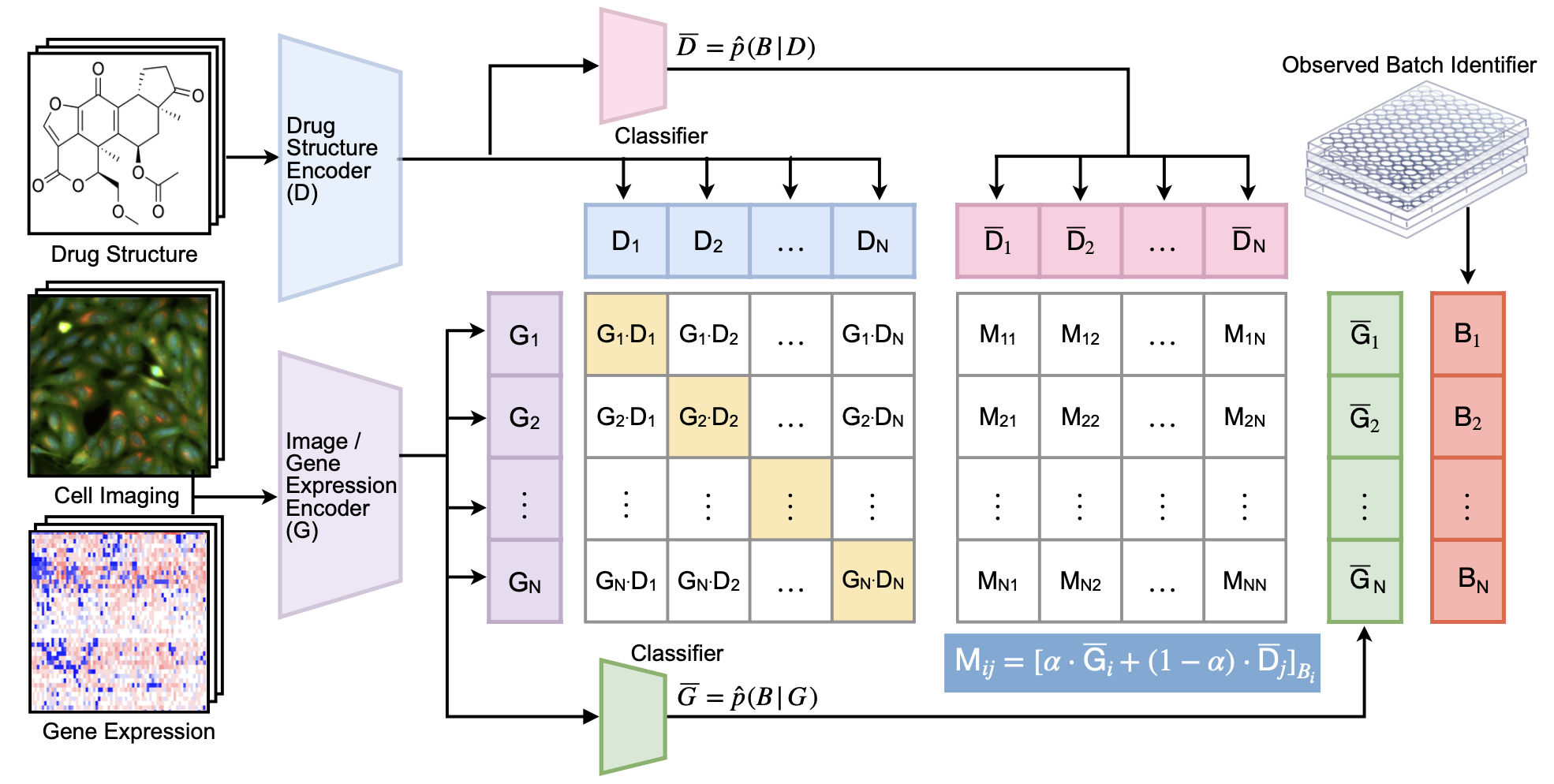

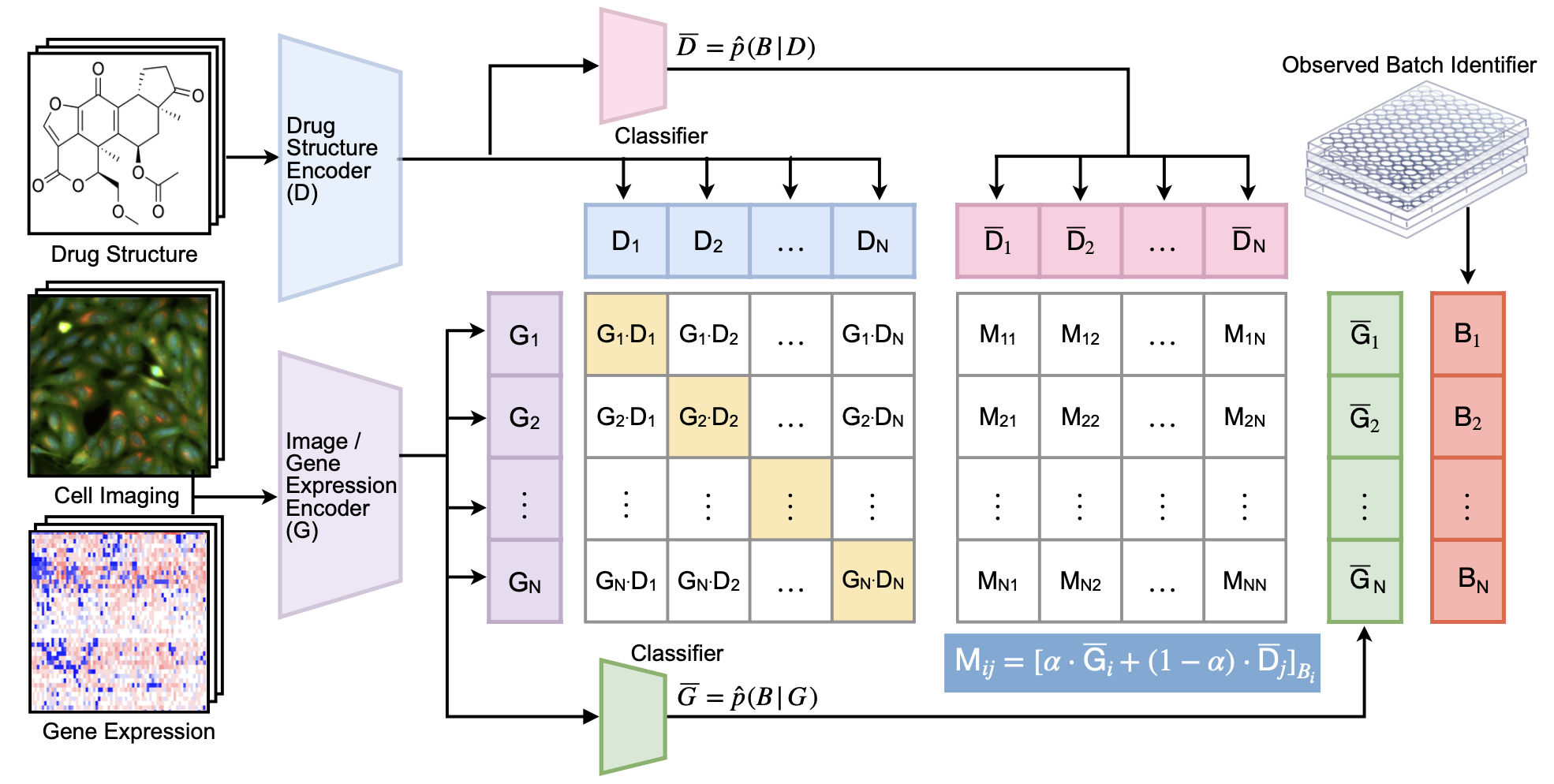

4. Removing Biases from Molecular Representations via Information Maximization. Chenyu Wang, Sharut Gupta, Caroline Uhler, Tommi Jaakkola. International Conference on Learning Representations. ICLR 2024. [Paper] [Code]

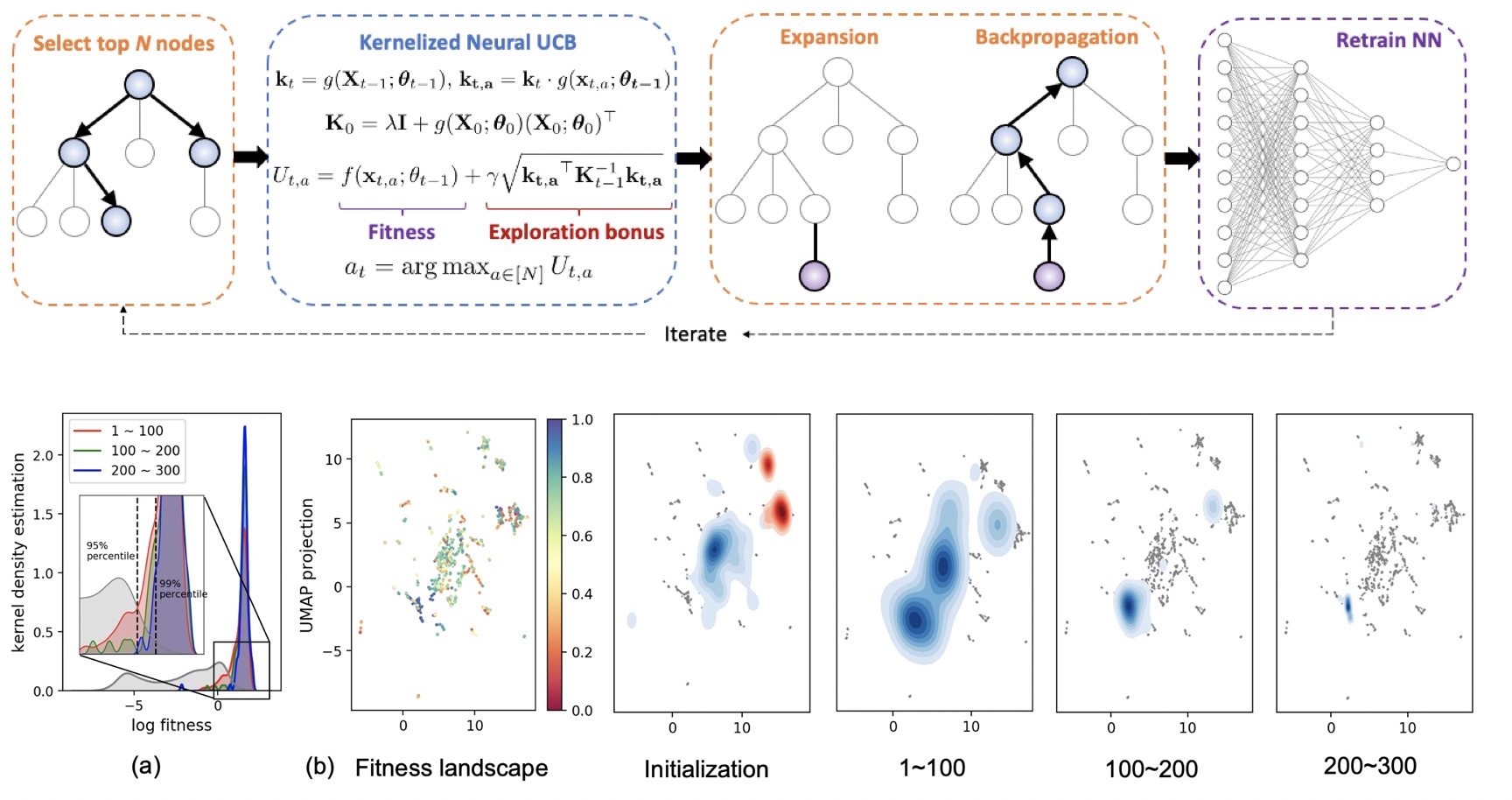

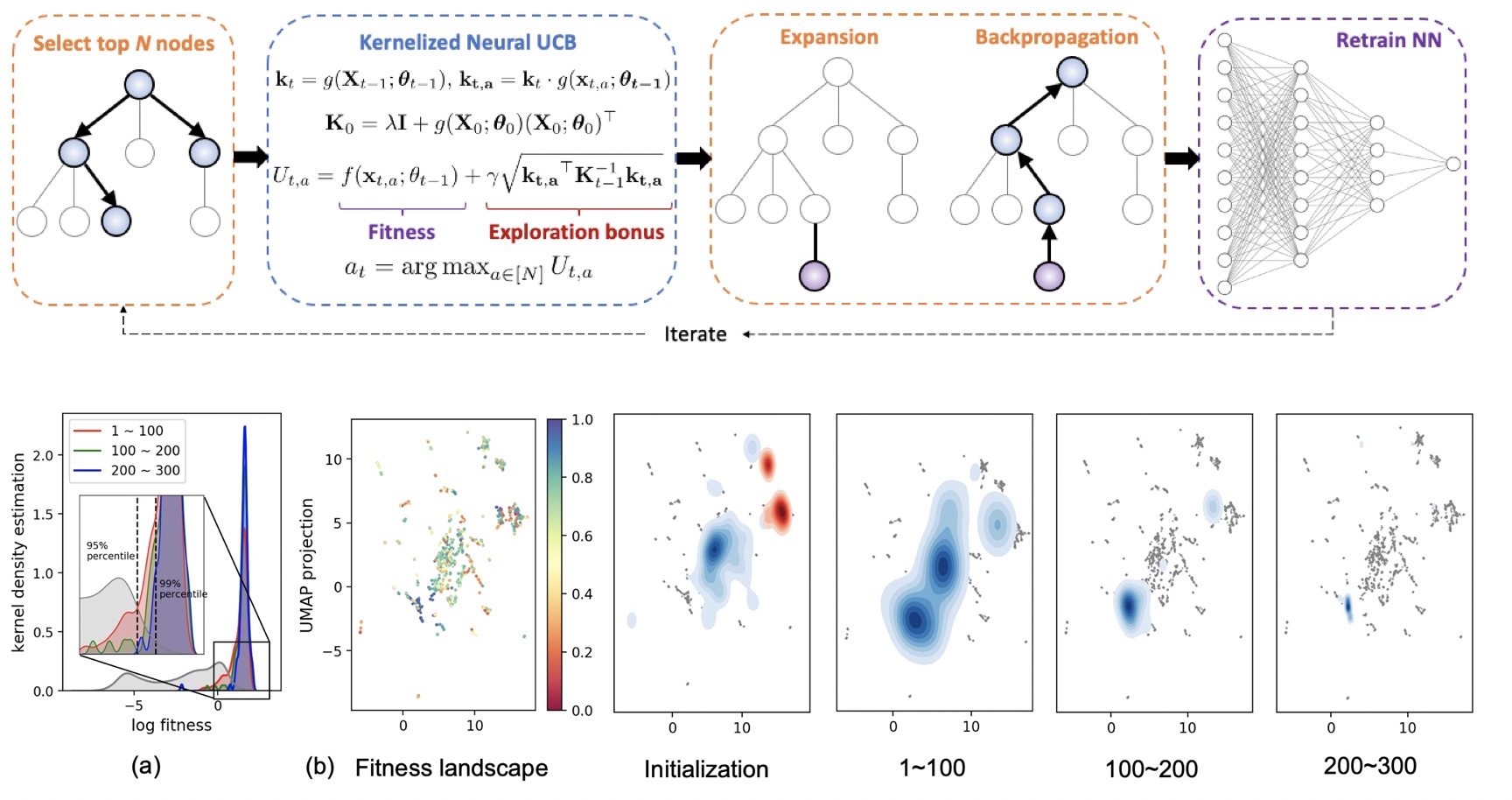

3. Tree-Based Neural Bandits for High-Value Protein Design. Chenyu Wang*, Joseph Kim*, Le Cong, Mengdi Wang. 56th Annual Conference on Information Sciences and Systems. CISS 2022. [Paper]

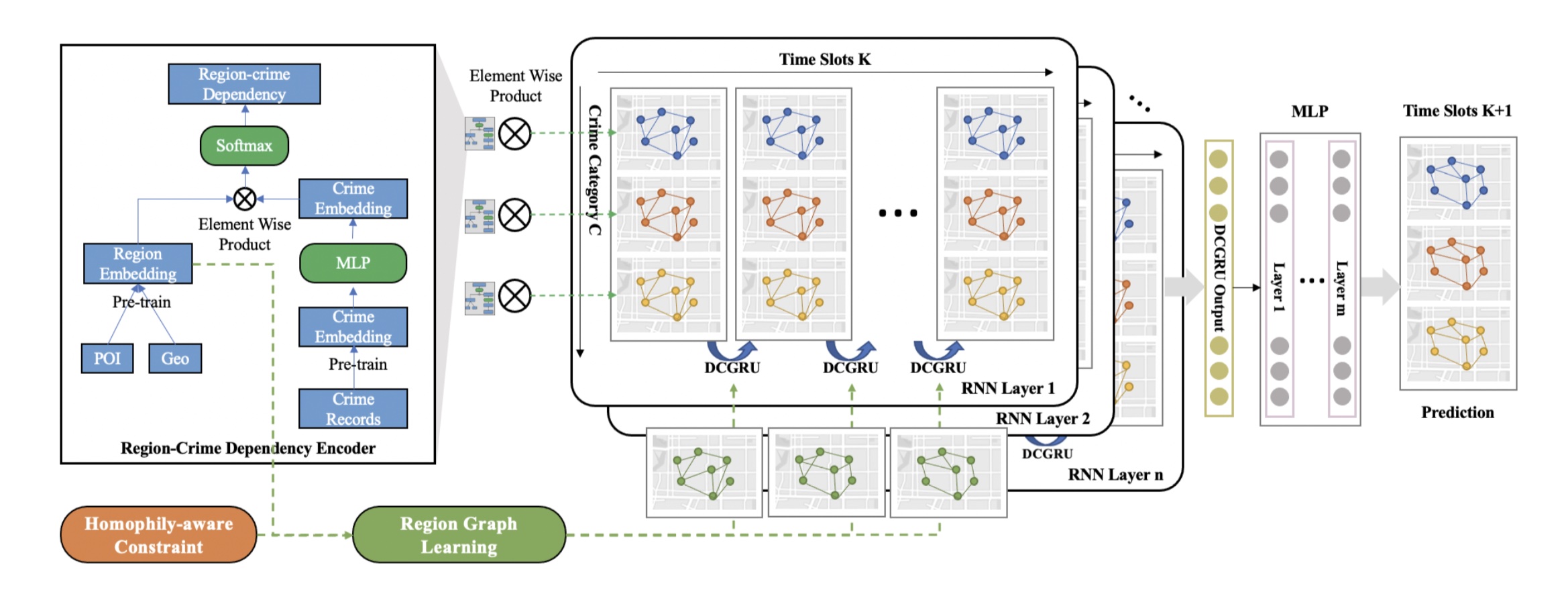

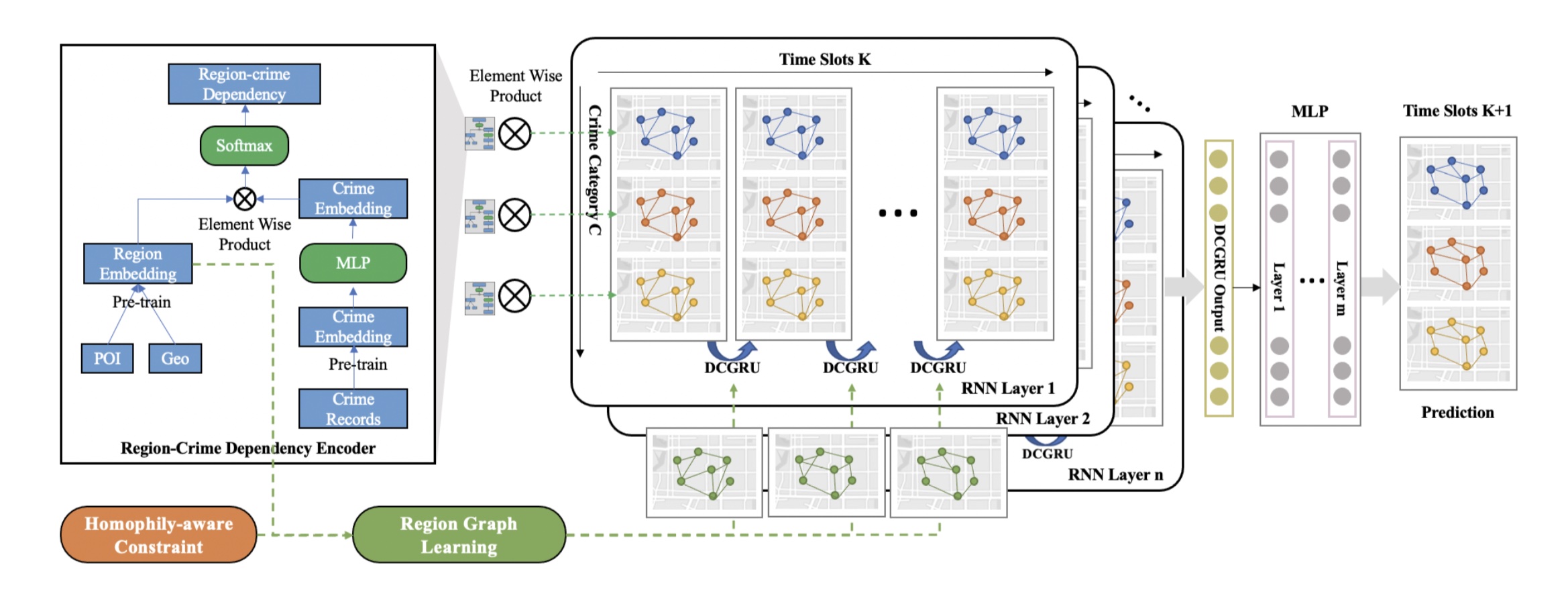

2. HAGEN: Homophily-Aware Graph Convolutional Recurrent Network for Crime Forecasting. Chenyu Wang*, Zongyu Lin*, Xiaochen Yang, Mingxuan Yue, Jiao Sun, Cyrus Shahabi. AAAI Conference on Artificial Intelligence. AAAI 2022. (Oral Presentation) [Paper] [Code] [Talk at TGL]

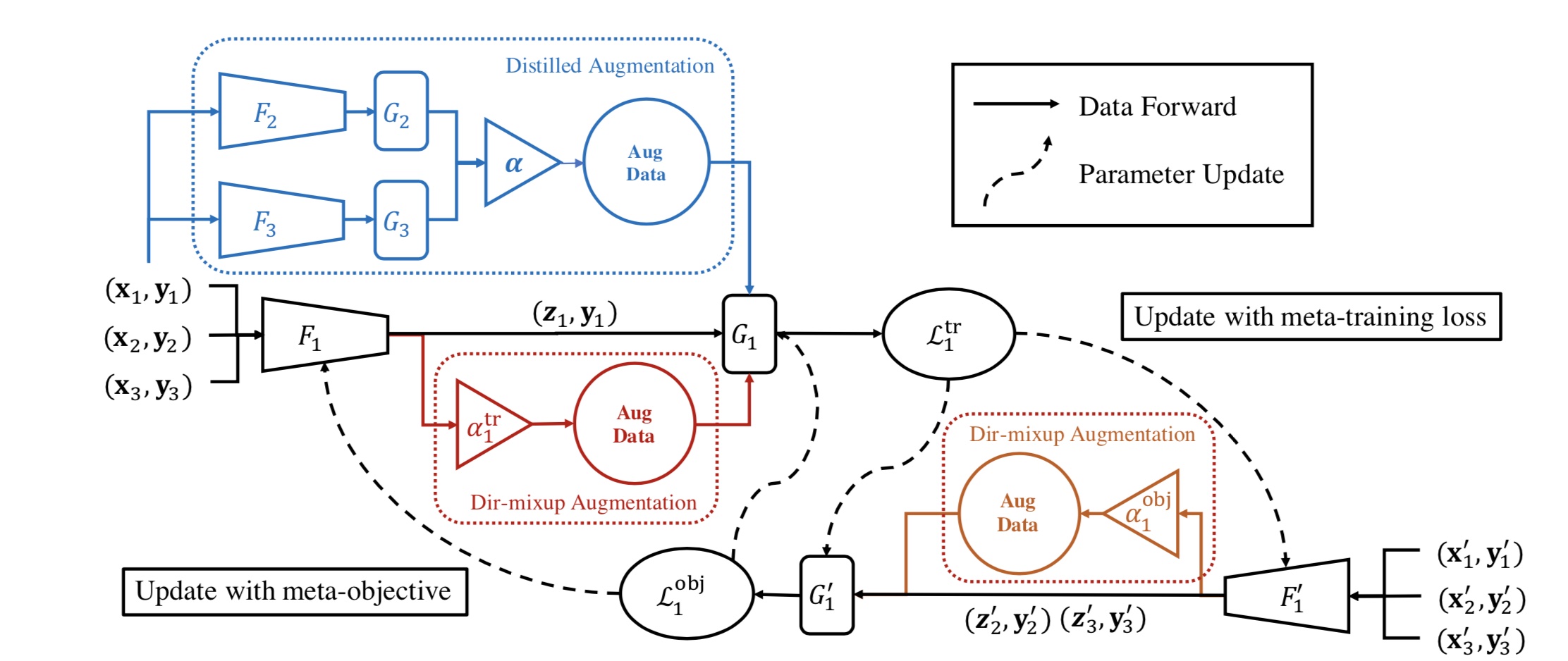

1. Open Domain Generalization with Domain-Augmented Meta-Learning. Yang Shu*, Zhangjie Cao*, Chenyu Wang, Jianmin Wang, Mingsheng Long. IEEE Conference on Computer Vision and Pattern Recognition. CVPR 2021. [Paper] [Code]

Education and Research Experience

- Massachusetts Institute of Technology, 2022.08-Present. PhD student in Computer Science. Advisor: Tommi Jaakkola

- Meta FAIR, 2025.05-2025.08. Research intern. Advisor: Yuandong Tian, Bo Liu

- Genentech, 2024.05-2024.08. Research intern. Advisor: Aviv Regev. Mentor: Hanchen Wang, Masatoshi Uehara

- Tsinghua University, 2018.08-2022.06. B.S. in Economics, Minor in Data Science and Technology. Advisor: Mingsheng Long. Mentor: Yang Shu

- Princeton University, 2021.06-2021.12. Research intern. Advisor: Mengdi Wang, Le Cong. Mentor: Joseph Kim, Huazheng Wang

- University of Southern California, 2021.01-2021.06. Research intern. Advisor: Cyrus Shahabi. Mentor: Jiao Sun, Mingxuan Yue

Selected Awards

- 2025 Citadel GQS PhD Fellowship (the only recipient in EECS)

- 2025 D. E. Shaw Research Doctoral Fellowship

- 2024 Honorable Mention Award, NeurIPS 2024 UniReps Workshop

- 2022 MIT EECS Great Educators Fellowship

- 2022 Outstanding Undergraduate in Tsinghua (2% in Tsinghua)

- 2022 Outstanding Undergraduate in Beijing

- 2022 Chen Daisun Scholarship (3 in Tsinghua SEM)

- 2022 Undergraduate Commencement Student Speaker of Tsinghua SEM

- 2021 Meritorious Winner in MCM/ICM Mathematical Contest in Modeling

- 2020 Tang Lixin Scholarship (50 in Tsinghua)

- 2019 National Scholarship (0.2% in China)

- 2019 Athletics Excellence Scholarship of Tsinghua

- 2018 Gold medalist at the 50th International Chemistry Olympiad (4 in China, 6th place in the world)

- 2016 Silver medalist at the 15th China Girls’ Mathematical Olympiad (50 in China)

Internship Experience

- Meta FAIR, Research Intern, May 2025 - Aug. 2025

- Genentech, Research Intern at Regev Lab, May 2024 - Aug. 2024

- Jane Street, Quantitative Trading Intern (return offer extended), Jun. 2021 - Sept. 2021

- WizardQuant Capital Management, Quantitative Research Intern, Jun. 2020 - Aug. 2020

- Techsharpe Quant Capital Management, Data Analyst Intern, Jan. 2020 - Feb. 2020

Services

Reviewer: ICLR 2025/2026, NeurIPS 2024/2025, ICML 2025, PLOS Computational Biology

Miscellaneous

I enjoy (and am perhaps good at) sports. During undergrad, I was an active member of my school’s track and soccer teams, taking 1st place in the 4×400m relay and 3rd place in the 1500m, and winning the women’s soccer championship. I’m also a fan of literature and classical music, and I enjoy travelling and tasting local delicacies.
